1. Brief summary

I decided to deploy a new ELK stack in Kubernetes. I installed it with Helm using a custom values.yaml. As a result, I got 3 Elasticsearch pods controlled by a StatefulSet, 1 Kibana pod and 1 APM Server pod.

The task is to move all indices from an old Elasticsearch server to the Elasticsearch cluster in k8s: about 100Gi of indices with millions of documents. To accomplish this, I used Kibana’s Snapshot and Restore feature. It is easy to use but requires some configuration on both servers.

2. Snapshot Configuration

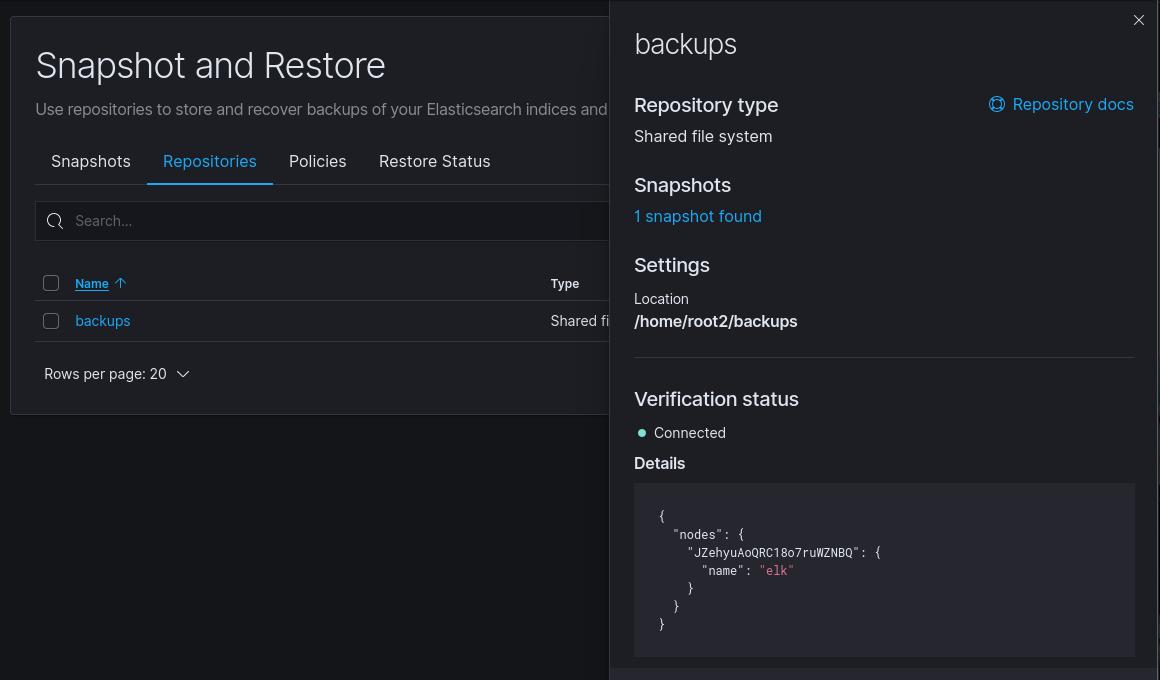

So let’s begin with configuring snapshots on the source Elasticsearch server. First we need to back up all necessary indices. In Kibana on your source (old) server, navigate to Management -> Snapshot and Restore and click Register repository. Elasticsearch supports several repository types, among them shared file system and read-only URL repositories. We are going to use the Shared file system type.

Set a repository name, select Shared file system and click Next.

Now we need to set the “File system location”. The location must be registered in the path.repo setting on all master and data nodes.

To use a directory as the repository location, you must first add that path, or one of its parent directories, to the path.repo entry in elasticsearch.yml.

The path.repo entry should look similar to:

```yaml
path.repo: ["/home/root/backups"]
```

For package installations, the Elasticsearch configuration file is located at /etc/elasticsearch/elasticsearch.yml

NOTE: path.repo is a static setting, so after adding it you need to restart the Elasticsearch nodes. Additionally, the values supported for path.repo may vary depending on the platform running Elasticsearch.
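The rule stated above (the repository location must be a path.repo entry or lie beneath one) can be sketched as a small Python check. This is a simplified model for illustration, not Elasticsearch’s own code: `location_allowed` is a hypothetical helper, relative locations are resolved against the first path.repo entry, and symlinks or `..` segments are not resolved.

```python
from pathlib import PurePosixPath
from typing import List


def location_allowed(location: str, path_repo: List[str]) -> bool:
    """Return True if `location` is a path.repo entry or lies beneath one.

    Simplified sketch: relative locations are resolved against the first
    path.repo entry; symlinks and ".." segments are NOT resolved.
    """
    if not path_repo:
        return False  # no path.repo configured: no fs location is allowed
    loc = PurePosixPath(location)
    if not loc.is_absolute():
        loc = PurePosixPath(path_repo[0]) / loc
    for entry in path_repo:
        root = PurePosixPath(entry)
        if loc == root or root in loc.parents:
            return True
    return False


# Usage with the values from this article:
print(location_allowed("/home/root/backups", ["/home/root/backups"]))  # True
print(location_allowed("/home/root/other", ["/home/root/backups"]))    # False
```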

Now go back to Register repository in Kibana, set File system location to /home/root/backups (the path from path.repo) and click Register. Let’s check that everything went fine: click the repository you’ve added and look at Verification status. You should see a green Connected status.
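The same registration and verification can also be done through the Elasticsearch snapshot REST API, for example from Kibana Dev Tools. The Python sketch below only builds the requests (method, path, JSON body) so it runs without a cluster; the repository name `old_backups` is a hypothetical example, and sending the requests (with authentication, if security is enabled) is left to whatever HTTP client you use.

```python
import json
from typing import Optional, Tuple

Request = Tuple[str, str, Optional[dict]]  # (HTTP method, path, JSON body)


def register_fs_repository(name: str, location: str, readonly: bool = False) -> Request:
    """PUT _snapshot/<name>: register a shared file system ("fs") repository."""
    body = {"type": "fs", "settings": {"location": location, "readonly": readonly}}
    return "PUT", f"_snapshot/{name}", body


def verify_repository(name: str) -> Request:
    """POST _snapshot/<name>/_verify: check that the nodes can access the repository."""
    return "POST", f"_snapshot/{name}/_verify", None


def as_console(req: Request) -> str:
    """Format a request the way Kibana Dev Tools expects it."""
    method, path, body = req
    text = f"{method} {path}"
    if body is not None:
        text += "\n" + json.dumps(body, indent=2)
    return text


print(as_console(register_fs_repository("old_backups", "/home/root/backups")))
print(as_console(verify_repository("old_backups")))
```

The printed text can be pasted into Dev Tools as-is; the location must still satisfy the path.repo rule.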

Next, create a snapshot policy in the Policies tab. You can configure the policy as you wish, but pay attention to step 2, “Data streams and indices”: you can back up all your indices, including system indices, or select specific ones with index patterns, e.g. logstash-*. Once the policy is created, you can run it to start a snapshot. This can take some time; when it finishes, all your indices are backed up in the /home/root/backups folder.
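A policy created in the Policies tab corresponds to a snapshot lifecycle management (SLM) policy in Elasticsearch. As a rough sketch, assuming a policy ID `migration`, the repository `old_backups` and the `logstash-*` pattern (all hypothetical), the “create” and “run now” requests could be built like this. The schedule uses Elasticsearch’s cron syntax with seconds first; the default here means daily at 01:30.

```python
from typing import List, Optional, Tuple

Request = Tuple[str, str, Optional[dict]]  # (HTTP method, path, JSON body)


def create_policy(policy_id: str, repository: str, index_patterns: List[str],
                  schedule: str = "0 30 1 * * ?") -> Request:
    """PUT _slm/policy/<policy_id>: define a snapshot lifecycle policy."""
    body = {
        "schedule": schedule,                        # sec min hour day month weekday
        "name": "<" + policy_id + "-snap-{now/d}>",  # date-math snapshot name
        "repository": repository,
        "config": {
            "indices": index_patterns,               # e.g. ["logstash-*"]
            "include_global_state": True,            # also capture templates, persistent settings, ...
        },
    }
    return "PUT", f"_slm/policy/{policy_id}", body


def execute_policy(policy_id: str) -> Request:
    """POST _slm/policy/<policy_id>/_execute: take a snapshot right away."""
    return "POST", f"_slm/policy/{policy_id}/_execute", None


print(create_policy("migration", "old_backups", ["logstash-*"]))
print(execute_policy("migration"))
```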

3. Restore Configuration

And now the fun part starts. Let’s configure snapshot and restore on the Elasticsearch k8s cluster.

We also need to set path.repo here, but this is a 3-node cluster, so instead of editing elasticsearch.yml on every node we can set it once in the StatefulSet. Edit the Elasticsearch StatefulSet (for example with kubectl edit statefulset) and add this to the container’s env section:

```yaml
- name: path.repo
  value: /usr/share/elasticsearch/data/snapshot
```

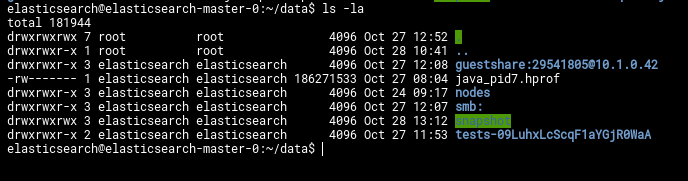

But this is not yet enough to configure snapshots: the /usr/share/elasticsearch/data/snapshot folder must also exist. First, find where those volumes are located on the host machine:

```shell
kubectl get pv
kubectl describe pv pvc-64cb553b-60e9-465d-a187-74de3d585db6  # one of the Elasticsearch PVs
```

In the output you will see something like this:

```
Path: /var/snap/microk8s/common/default-storage/default-elasticsearch-master-elasticsearch-master-0-pvc-64cb553b-60e9-465d-a187-74de3d585db6
```

Create a new snapshot directory inside the PV of each of the 3 Elasticsearch nodes (shown here for elasticsearch-master-0):

```shell
mkdir /var/snap/microk8s/common/default-storage/default-elasticsearch-master-elasticsearch-master-0-pvc-64cb553b-60e9-465d-a187-74de3d585db6/snapshot
```

Give the elasticsearch user (UID 1000 in the official image) ownership of the snapshot folder:

```shell
chown -R 1000:1000 /var/snap/microk8s/common/default-storage/default-elasticsearch-master-elasticsearch-master-0-pvc-64cb553b-60e9-465d-a187-74de3d585db6/snapshot
```
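With three Elasticsearch pods, the mkdir and chown steps have to be repeated for every PV. A hedged Python sketch that derives all the paths from `kubectl get pv -o json` could look like the following. It assumes the PVs are hostPath volumes (as the `Path:` line in the describe output suggests for the microk8s default storage) and that their claims are named `elasticsearch-master-...`, as in the path above; `snapshot_dirs` and `create_dirs` are hypothetical helpers, and `create_dirs` must run as root on the host that holds the volumes.

```python
import os
from pathlib import PurePosixPath
from typing import List


def snapshot_dirs(pv_list: dict, claim_prefix: str = "elasticsearch-master-") -> List[str]:
    """Return '<hostPath>/snapshot' for every hostPath PV bound to an Elasticsearch claim.

    `pv_list` is the parsed output of `kubectl get pv -o json`.
    """
    dirs = []
    for pv in pv_list.get("items", []):
        spec = pv.get("spec", {})
        claim = spec.get("claimRef", {}).get("name", "")
        host_path = spec.get("hostPath", {}).get("path")
        if claim.startswith(claim_prefix) and host_path:
            dirs.append(str(PurePosixPath(host_path) / "snapshot"))
    return sorted(dirs)


def create_dirs(dirs: List[str], uid: int = 1000, gid: int = 1000) -> None:
    """mkdir + chown uid:gid for each directory (root is needed to chown to another user)."""
    for d in dirs:
        os.makedirs(d, exist_ok=True)
        os.chown(d, uid, gid)
```

For example, save the PV list with `kubectl get pv -o json > pvs.json`, then, as root, call `create_dirs(snapshot_dirs(json.load(open("pvs.json"))))`.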

Now you can save the StatefulSet. It will recreate all 3 Elasticsearch pods, and you can exec into a pod to check that the new snapshot folder is there.

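But that’s not all. Each of the 3 nodes now has its own snapshot folder, but every folder lives on a separate volume, so a node cannot access the files on the other nodes. A shared file system repository needs the same storage to be reachable from all master and data nodes.

To fix this, we need to create an NFS shared folder on the source server and a new PV and PVC for the destination Elasticsearch k8s cluster.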

Let’s start with the NFS share on the source Elasticsearch server:

```shell
sudo apt update
sudo apt install nfs-kernel-server
```

On the destination Elasticsearch k8s server, which acts as the NFS client, we need to install a package called nfs-common. It provides NFS client functionality without including any server components. Again, refresh the local package index before installing to make sure you have up-to-date information:

```shell
sudo apt update
sudo apt install nfs-common
```

Now that both servers have the necessary packages, we can start configuring them.

Creating the Share Directories on the Host

We already created the /home/root/backups directory. Now let’s configure the NFS export on the source host. Open /etc/exports:

```shell
sudo nano /etc/exports
```

add the following line:

```
/home/root/backups *(rw,sync,no_subtree_check)
```

and restart the NFS server:

```shell
sudo systemctl restart nfs-kernel-server
```

At this point the NFS share is configured.

Move to the destination Elasticsearch server, create two files, pv.yaml and pvc.yaml, and paste the content below.

pv.yaml

```yaml
apiVersion: v1
kind: PersistentVolume
metadata:
  name: nfs
spec:
  capacity:
    storage: 100Gi
  accessModes:
    - ReadWriteMany
  nfs:
    server: source_elastic_ip_server # Please change this to your NFS server
    path: "/home/root/backups"
```

pvc.yaml

```yaml
apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: nfs
spec:
  accessModes:
    - ReadWriteMany
  storageClassName: ""
  resources:
    requests:
      storage: 100Gi # volume size requested
  volumeName: nfs
```

Apply the files and check the result:

```shell
kubectl apply -f pv.yaml
kubectl apply -f pvc.yaml
kubectl get pv
kubectl get pvc
```
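The PV and PVC above only bind to each other because several fields agree: the PVC names the PV in `volumeName`, both use `ReadWriteMany`, the PV’s capacity covers the PVC’s request, and `storageClassName: ""` on the PVC disables dynamic provisioning. A small Python sketch that generates both manifests from one set of parameters keeps them consistent; the function name and the IP address (a documentation-range placeholder) are hypothetical. kubectl also accepts JSON, so the printed output can be piped to `kubectl apply -f -`.

```python
import json
from typing import Tuple


def nfs_pv_and_pvc(name: str, server: str, path: str,
                   size: str = "100Gi") -> Tuple[dict, dict]:
    """Build a statically bound NFS PersistentVolume and PersistentVolumeClaim."""
    pv = {
        "apiVersion": "v1",
        "kind": "PersistentVolume",
        "metadata": {"name": name},
        "spec": {
            "capacity": {"storage": size},
            "accessModes": ["ReadWriteMany"],
            "nfs": {"server": server, "path": path},
        },
    }
    pvc = {
        "apiVersion": "v1",
        "kind": "PersistentVolumeClaim",
        "metadata": {"name": name},
        "spec": {
            "accessModes": ["ReadWriteMany"],
            "storageClassName": "",        # no dynamic provisioning
            "resources": {"requests": {"storage": size}},
            "volumeName": name,            # bind explicitly to the PV above
        },
    }
    return pv, pvc


pv, pvc = nfs_pv_and_pvc("nfs", "192.0.2.10", "/home/root/backups")  # hypothetical NFS server IP
print(json.dumps({"apiVersion": "v1", "kind": "List", "items": [pv, pvc]}, indent=2))
```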

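You should see both, with the PVC bound to the nfs PV. Now let’s modify the StatefulSet again. In the pod template’s volumes section (spec.template.spec.volumes), add the PVC:

```yaml
volumes:
  - name: nfs
    persistentVolumeClaim:
      claimName: nfs
```

In the Elasticsearch container’s volumeMounts section, add our NFS share:

```yaml
- name: nfs
  mountPath: /usr/share/elasticsearch/data/snapshot
```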
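Instead of editing the StatefulSet by hand, the same two additions can be applied as an RFC 6902 JSON Patch with `kubectl patch statefulset <name> --type=json -p '<patch>'`. The Python sketch below builds that patch. It assumes the Elasticsearch container is the first container (index 0) and that both `volumes` and `volumeMounts` already exist as arrays, because the `/-` suffix appends to an existing array; if `volumes` is missing, add the whole array instead. The StatefulSet name is an assumption too (the PV names above suggest `elasticsearch-master`).

```python
import json
from typing import List


def nfs_mount_patch(claim: str = "nfs",
                    mount_path: str = "/usr/share/elasticsearch/data/snapshot",
                    container_index: int = 0) -> List[dict]:
    """JSON Patch ops adding the NFS PVC as a volume and mounting it in one container."""
    pod_spec = "/spec/template/spec"
    return [
        {   # pod-level volume backed by the PVC
            "op": "add",
            "path": f"{pod_spec}/volumes/-",
            "value": {"name": claim, "persistentVolumeClaim": {"claimName": claim}},
        },
        {   # container-level mount of that volume
            "op": "add",
            "path": f"{pod_spec}/containers/{container_index}/volumeMounts/-",
            "value": {"name": claim, "mountPath": mount_path},
        },
    ]


print(json.dumps(nfs_mount_patch()))
```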

Save the StatefulSet and it will recreate the 3 Elasticsearch pods. Then open Kibana on the destination host and continue configuring Snapshot and Restore.

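Register a new repository and set File system location to /usr/share/elasticsearch/data/snapshot. Because the source cluster also writes to this share, consider enabling the repository’s read-only setting: Elasticsearch recommends that only one cluster has write access to a shared repository. Once the repository is registered, the snapshot taken on the source server should appear in the Snapshots tab.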
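To check the same thing from the API side, `GET _snapshot/<repository>/_all` lists the snapshots in a repository, each with a `state` and a `start_time_in_millis`. A minimal Python sketch that picks the newest successful snapshot from that response might look like this; the helper name and the sample data are hypothetical.

```python
from typing import Optional


def latest_successful_snapshot(response: dict) -> Optional[str]:
    """Name of the most recent SUCCESS snapshot in a GET _snapshot/<repo>/_all response."""
    done = [s for s in response.get("snapshots", []) if s.get("state") == "SUCCESS"]
    if not done:
        return None
    return max(done, key=lambda s: s["start_time_in_millis"])["snapshot"]


sample = {
    "snapshots": [
        {"snapshot": "migration-snap-2021.01.01", "state": "SUCCESS", "start_time_in_millis": 1609459200000},
        {"snapshot": "migration-snap-2021.01.02", "state": "PARTIAL", "start_time_in_millis": 1609545600000},
    ]
}
print(latest_successful_snapshot(sample))  # migration-snap-2021.01.01
```

Skipping `PARTIAL` snapshots avoids restoring a snapshot in which some shards failed.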

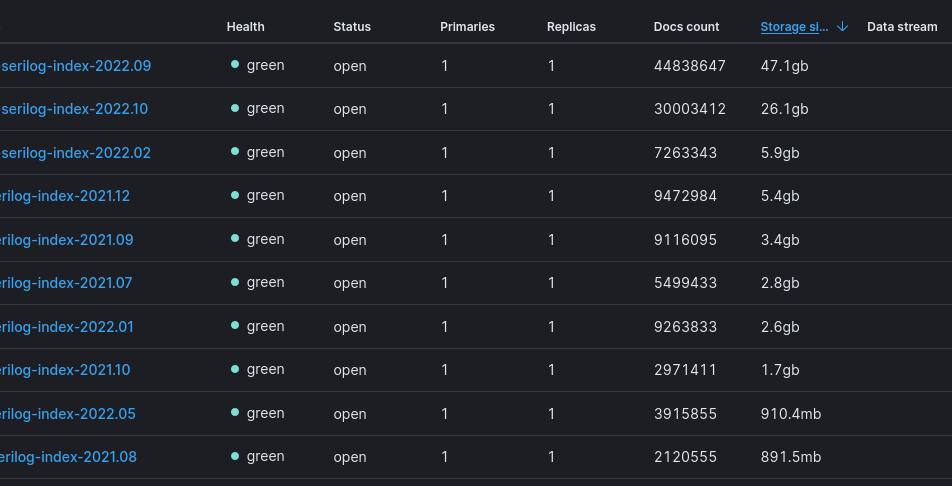

All that’s left is to press the Restore button and start the restore. After some time, navigate to Index Management and check for the new indices.
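The Restore button corresponds to the restore snapshot API. As a sketch (repository and snapshot names hypothetical), the request below restores only the indices matching a pattern and leaves the destination cluster’s global state alone. Restore progress can then be followed with `GET _cat/recovery?active_only=true`.

```python
from typing import Optional, Tuple

Request = Tuple[str, str, Optional[dict]]  # (HTTP method, path, JSON body)


def restore_request(repository: str, snapshot: str, indices: str,
                    include_global_state: bool = False) -> Request:
    """POST _snapshot/<repo>/<snapshot>/_restore for the given index pattern(s)."""
    body = {
        "indices": indices,                            # comma-separated names/patterns
        "include_global_state": include_global_state,  # False: keep this cluster's templates/settings
    }
    return "POST", f"_snapshot/{repository}/{snapshot}/_restore", body


print(restore_request("k8s_backups", "migration-snap-2021.01.01", "logstash-*"))
```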

That’s it.

NOTE: After the restore you may get some exceptions and a “No shard” error. That is probably caused by insufficient resources for the Elasticsearch pods. You can give them more resources in the resources: section of the StatefulSet:

```yaml
resources:
  limits:
    cpu: '1'
    memory: 1024M
  requests:
    cpu: 100m
    memory: 1024M
```

Then rerun the restore, and that should fix the problem. Delete or close any partially restored indices first, since Elasticsearch cannot restore into an existing open index with the same name.
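Before rerunning the restore, it can help to see which shards are actually unassigned and why. `GET _cat/shards?h=index,shard,prirep,state,unassigned.reason` prints one shard per line; a small Python sketch that filters that text for unassigned shards follows (the sample output is made up for illustration). For a detailed explanation per shard, `GET _cluster/allocation/explain` is the API to look at.

```python
from typing import List, Tuple


def unassigned_shards(cat_shards: str) -> List[Tuple[str, str, str, str]]:
    """Parse `_cat/shards?h=index,shard,prirep,state,unassigned.reason` text.

    Returns (index, shard, prirep, reason) for each UNASSIGNED shard.
    """
    result = []
    for line in cat_shards.splitlines():
        fields = line.split()
        if len(fields) >= 4 and fields[3] == "UNASSIGNED":
            reason = fields[4] if len(fields) > 4 else ""
            result.append((fields[0], fields[1], fields[2], reason))
    return result


sample = """\
logstash-2021.01.01 0 p STARTED
logstash-2021.01.01 0 r UNASSIGNED NODE_LEFT
logstash-2021.01.02 0 p UNASSIGNED NEW_INDEX_RESTORED
"""
for shard in unassigned_shards(sample):
    print(shard)
```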